Natural Science - Year II

Unit 67: Systems and States

History Weblecture for Unit 67


History Lecture for Unit 67: Systems

For Class

- Period: 1400-1970

- Geographic Location: Europe and the United States

- People to know: Ludwig von Bertalanffy, Kenneth Boulding, Anatol Rapoport, Ervin László, Fritjof Capra, Stephen Wolfram.

- See science topics: Systems Science

Lecture:

Systems Science

The rapid progress of science in the twentieth century forced increasing specialization in every field, which isolated biologists from physicists and astronomers. It became increasingly hard to come up with a unified scientific view of the universe.

Advances in thermodynamics and chemistry also challenged earlier concepts of scientific knowledge, especially the methods of reductionism and analysis that underlie classical approaches to nature. Science in much of the twentieth century followed classical methods, even when dealing with radical concepts like quantum mechanics and relativity, and attempted to find the causes driving a sequence of events by investigating the behavior of the individual objects involved, in isolation from each other and from their environment.

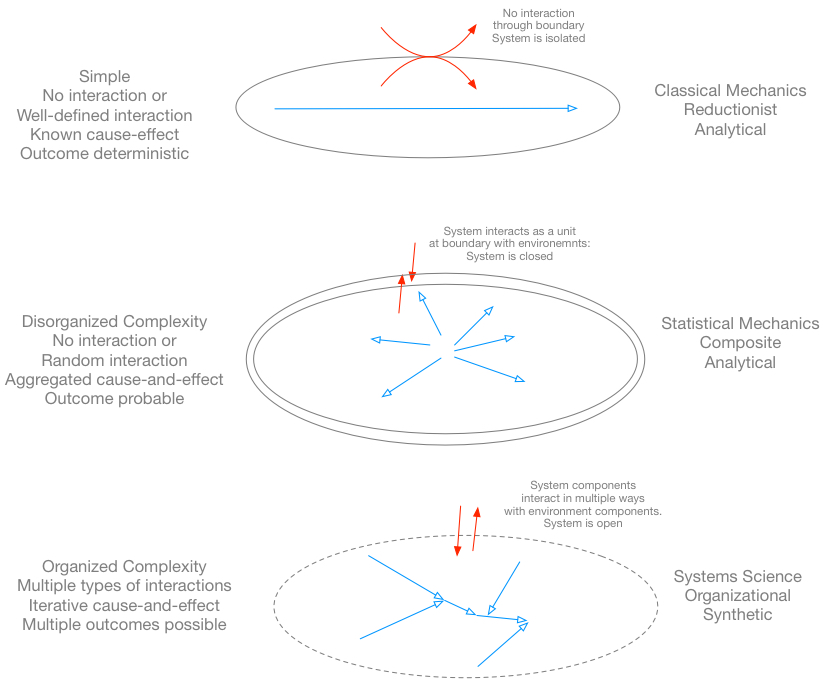

Only "simple" situations are really subject to the mathematics of analysis developed for classical mechanics, which extrapolates behavior based on known, fundamental laws. As we've seen, gas molecules form a "disorganized complexity" (disorganized because the relationships are random) that must be addressed with statistical mechanics, but even then, the gas molecules are assumed to interact according to strict relationships based on random distributions, or not at all, and we can calculate an average behavior (assuming that randomness cancels out extremes) with some accuracy.

But there are other situations which cannot be analyzed with either method, because in these cases, the factors affecting behavior of individual components of the group are not random, but governed by complex relationships and interaction between the different objects in the situation. These include the relationship between the genetic constitution of the cell and the behavior of the adult organism, the interrelationship between economic markets and individual economic behavior, the influence of specific weather systems on the global climate, and patterns of thought and memory in the human brain. In such "organized complexity", where relationships have rules but the rules change depending on circumstances, we need a new way of recording and analyzing our observations.

Developing a General Systems Theory

The development of a generic "systems science" approach that could be used in many fields occurred through changes that reflected new generations of scientists.

The first "generation" of scientists who deliberately tried to develop and use a systems approach came from different natural and social sciences. Ludwig von Bertalanffy recognized a difference between closed systems with definite boundaries, and open systems subject to many influences, some not obvious. Because he was not allowed to leave Austria after the Anschluss (takeover by Germany) in 1938, he became a member of the Nazi party, and head of the University of Vienna during World War II. He migrated to Canada after World War II and did most of his work on general systems theory there. Because of his former Nazi associations, he kept a low profile in scientific circles, but his work in systems theory has influenced every area of science over the last five decades, especially information science and engineering.

Bertalanffy developed the individual growth model to account for population growth in ecosystems and cell growth in individuals. It uses the equation

$$\frac{\Delta L(t)}{\Delta t} = r\,\bigl(L_T - L(t)\bigr)$$

The equation says that the change Δ in a value measuring growth as a function of time L(t) depends on some rate r, a limiting or final value $L_T$, and the function itself. This kind of relationship is an iterative relationship: each new value depends on the calculations for the last step, and the result of that step becomes part of the formula for the next step. A similar example would be the growth of interest on a bank savings account, where the sum of today's interest and yesterday's principal becomes the new principal for calculating tomorrow's interest.
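The iterative idea can be sketched in a few lines of Python. This is only an illustration: it assumes the growth rule has the form "change in L per step = r × (limit − L)", which matches the description above, and the function names `grow` and `compound`, the starting values, and the rates are all made up for the example.

```python
def grow(length, rate, limit, steps, dt=1.0):
    """Repeatedly apply L_next = L + rate * (limit - L) * dt.

    Each pass through the loop uses the result of the previous pass,
    which is what makes the relationship iterative.
    """
    history = [length]
    for _ in range(steps):
        length = length + rate * (limit - length) * dt
        history.append(length)
    return history


def compound(principal, daily_rate, days):
    """Bank-account analogy: today's interest is added to the principal,
    and the sum becomes the principal for tomorrow's calculation."""
    for _ in range(days):
        principal = principal + principal * daily_rate
    return principal


# Hypothetical numbers: start at size 1, limiting size 100, rate 0.5 per step.
sizes = grow(length=1.0, rate=0.5, limit=100.0, steps=5)
print(sizes)  # each value is closer to the limit than the one before

print(compound(principal=1000.0, daily_rate=0.01, days=3))
```

Notice the difference between the two loops: the growth model slows down as it approaches its limiting value, while the bank balance has no limit and keeps accelerating.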

The other major concept Bertalanffy introduced was the idea of "open systems". We talked about "closed systems" with regard to the development of gas laws and the principles of thermodynamics. Bertalanffy recognized the usefulness of this approach for many situations, but did not think it could be used to adequately explain living organisms, which constantly interact with their environments in complex ways, whether we consider cells within tissues, tissues within organs, or organisms within their ecosystems. One of the implications of an open system is that energy input to the system can be limitless. For example, the Earth receives far more solar energy than ecosystems at the Earth's surface can use.

Most of Bertalanffy's early works were in German, but his mature theories were published in English, and include the books Robots, Men, and Minds: Psychology in the Modern World, and General System Theory: Foundations, Development, Applications.

Another contributor to the development of General Systems Theory was Kenneth E. Boulding, who joined the Society of Friends (Quakers) after he immigrated to the United States, and became a pacifist and social activist. Where Francis Bacon had assumed unlimited resources and possibilities in the 17th Century, Boulding realized that Earth could not support unlimited growth, and that continued technical development needed to emphasize sustainable resources. One of his most popular and influential works was The Economics of the Coming Spaceship Earth. Influenced by Rachel Carson's Silent Spring with its emphasis on interconnections in ecosystems, Boulding's economic works focused on fitting economic theory and prediction within the limited resources of "Spaceship Earth". His theories suggested that scientists could no longer work in isolated fields of specialization. Economists needed to pay attention to biologists, biologists to chemists, astronomers to historians, in order to come up with theories that actually fit the mass of data available.

In 1954, Boulding, Bertalanffy, and several others joined with Anatol Rapoport to form the Society for General Systems Research. Rapoport abandoned a musical career because the rise of Nazism made study in Europe impossible for a Jewish pianist. He emigrated to the United States and began a career as a mathematician, studying at the University of Chicago. After World War II (during which he served in the US Army Air Corps), he worked at universities in Michigan and Toronto, developing what we now call game theory, a way of dealing with complex interactions among different independent players who could exhibit free will. He attempted to write a software program that would play the following game, developed at RAND in 1950 as a game theory test:

Two partners in crime, A and B, are each in solitary confinement in isolation cells, unable to communicate. The prosecutors, who have insufficient evidence to convict either prisoner on the primary charge with a long prison sentence, at least hope to get them sentenced to one year on a lesser charge for which they do have evidence. They simultaneously offer the prisoners a bargain:

- If A and B each betrays the other on the primary charge, they will each serve two years in prison.

- If A betrays B but B remains silent, A will be set free and B will serve three years in prison (and vice-versa).

- If A and B both remain silent, both of them will serve only one year in prison on the lesser charge.

The laws of self-interest should lead each prisoner acting alone to choose option #2, hoping to be set free, which of course will actually lead to option #1. If each prisoner acts altruistically and refuses to betray his partner, both will wind up in situation #3, serving only the time for the lesser charge. In the iterated version of this game, the players are offered the choice over and over, knowing the previous choice made by the other prisoner.
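To see why self-interest pushes each prisoner toward betrayal, it can help to write the bargain out as a table and check A's best reply to each thing B might do. The sketch below uses the sentences from the three options above; the names `SENTENCES` and `best_reply_for_a` are invented for this illustration.

```python
# Years in prison for each pair of choices:
# (A's choice, B's choice) -> (A's sentence, B's sentence)
SENTENCES = {
    ("betray", "betray"): (2, 2),   # option 1
    ("betray", "silent"): (0, 3),   # option 2
    ("silent", "betray"): (3, 0),   # option 2, the other way around
    ("silent", "silent"): (1, 1),   # option 3
}


def best_reply_for_a(b_choice):
    """Return the choice that gives A the shortest sentence,
    assuming A somehow knew what B was going to do."""
    return min(("betray", "silent"),
               key=lambda a_choice: SENTENCES[(a_choice, b_choice)][0])


for b_choice in ("betray", "silent"):
    print("If B chooses", b_choice, "then A does best to", best_reply_for_a(b_choice))
```

Whatever B does, A serves less time by betraying, and the same reasoning applies to B. Yet if both follow that reasoning they serve two years each, which is worse for both than the one year each they would serve by staying silent.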

Rapoport's solution for player A for the iterated version was four lines long. It basically requires player A to remain silent (option #3) on the first move, then do whatever player B has just done in every subsequent move. In other words, A acts generously to start, then returns "tit-for-tat" for each choice B makes.
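The strategy just described is short enough to sketch in Python. This is not the original four-line program, only an illustration of the same idea. The function names (`tit_for_tat`, `always_betray`, `always_silent`, `play`) and the choice of ten rounds are assumptions made for the example; the sentences are the ones from the bargain above.

```python
# (A's choice, B's choice) -> (A's sentence, B's sentence), in years
SENTENCES = {
    ("betray", "betray"): (2, 2),
    ("betray", "silent"): (0, 3),
    ("silent", "betray"): (3, 0),
    ("silent", "silent"): (1, 1),
}


def tit_for_tat(opponent_moves):
    """Stay silent on the first move, then copy the opponent's last move."""
    return opponent_moves[-1] if opponent_moves else "silent"


def always_betray(opponent_moves):
    """A purely selfish player."""
    return "betray"


def always_silent(opponent_moves):
    """A purely generous player."""
    return "silent"


def play(strategy_a, strategy_b, rounds=10):
    """Play the iterated game and return the total years served by A and B."""
    moves_a, moves_b = [], []
    years_a = years_b = 0
    for _ in range(rounds):
        # Each player sees only what the other has done in earlier rounds.
        a = strategy_a(moves_b)
        b = strategy_b(moves_a)
        moves_a.append(a)
        moves_b.append(b)
        sentence_a, sentence_b = SENTENCES[(a, b)]
        years_a += sentence_a
        years_b += sentence_b
    return years_a, years_b


print(play(tit_for_tat, tit_for_tat))      # (10, 10)
print(play(always_betray, always_betray))  # (20, 20)
print(play(tit_for_tat, always_betray))    # (21, 18)
print(play(always_silent, always_betray))  # (30, 0)
```

In this small experiment, two tit-for-tat players serve half the time that two selfish players do. Against a selfish player, tit-for-tat is caught out only once, in the first round, while the purely generous player is exploited in every round.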

The interesting thing is that in the long run, Rapoport's strategy works better than a purely selfish strategy, or a purely generous strategy (A constantly stays silent despite B's choices). In Rapoport's strategy, cooperation is rewarded and defection punished, with the result that both sides wind up cooperating. The rules are:

- Be nice (don't be the first to betray your partner)

- Retaliate (betray if you are betrayed)

- Forgive (don't betray if you haven't been betrayed in the last cycle)

- Don't be envious (don't try to outscore your partner/opponent)

According to the game theorists, these turn out to be pretty good rules for adversarial situations of any kind. They avoid exploitation of one side by the other, but also prevent ongoing vendettas. They reward people for doing the right thing and, in real-world situations, they build trust.

Obviously, systems science goes far beyond the traditional realms of "hard" sciences, but it has many applications to complex physical systems as well.

Two more recent contributors to General Systems Theory are Ervin László and Fritjof Capra. László proposed that information is the substance of the universe; matter is merely the means of containing it. Among the implications of this are that the universe self-adjusts to form conscious lifeforms, and that evolution is an informed, rather than purely random, process. Capra's works emphasize connections between physics and metaphysics: he insists that either approach will result in the same answers, and that science and religion produce compatible views of the universe. His books include The Tao of Physics, which draws parallels between concepts in Eastern mystical traditions and modern physics, and The Turning Point: Science, Society, and the Rising Culture, which argues that science needs to develop holistic, systems approaches to new research and data, rather than rely on classical reductionism and rigid paradigms.

The Definition of Complexity

Defining complexity raises some significant issues. We start by defining a system as any group of objects we might want to consider, separated by a boundary from the rest of the universe or the environment. Elements within the boundary are components of the system and we can study their interactions with each other. The system as a whole can interact with its environment, but we generally don't examine interactions between components of the system and objects in the system's environment independently. If we really need to do that, we expand the boundaries of the system to include the objects as part of the system!

Areas where a systems approach has helped develop new understanding include chaos theory, cybernetics, control theory, operations research, biology, ecology, engineering, and psychology. In each of these areas, complex interactions and multiple outcomes make standard scientific analysis difficult.

An American physicist and entrepreneur, Stephen Wolfram, has proposed a twist on the General Systems Theory. Wolfram was born in England, and a bit like Bill Gates, dropped out of his local school, Eton College, and St. John's College in Oxford one after the other because he felt unchallenged. He received his doctorate in physics from the California Institute of Technology in 1980, at the age of 20. Wolfram began investigating whether knowledge itself could be computational, that is, whether applying rules to massive amounts of data (available by joining different data-gathering systems together and "mining" these data stores) could produce new models, perhaps even models that would explain natural phenomena such as quantum physics. Knowledge engines like Wolfram Alpha and IBM's Watson (which are systems of computers, not single computers) are self-analyzing. By looking at how the users interact with these systems, the designers and the systems themselves create even more data which becomes part of the information the system can use for the next question.

Wolfram developed the concept of computational irreducibility, the idea that some systems which are based on relatively simple rules, when taken through enough iterations, produce results that cannot necessarily be easily analyzed to discover the rules. He studied cellular automata: systems in which cell patterns reproduce new cell patterns based on specific rules.

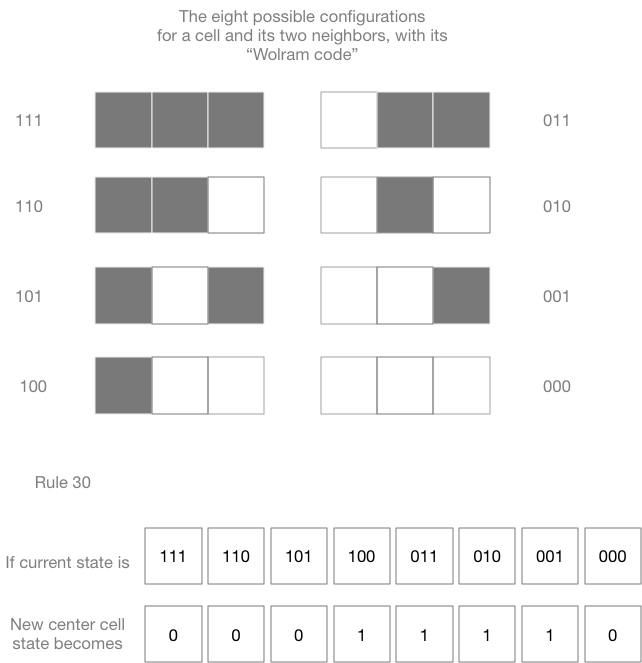

If we consider a single cell in a line of cells, each cell has two neighbors. Since each cell is either on or off, there are only eight possible configurations of a cell and its two neighbors, which can be written as three-digit binary numbers. Applied this way, the binary representations of 0-7 become a code. We can then make up a rule that dictates how each 3-cell pattern produces some outcome for the central cell of the pattern.

We can represent this rule graphically, as shown below. Each three-cell group gives a single cell, the new state of the central cell of the group. Because each of the eight patterns can produce either of two outcomes, there are 2^8 = 256 possible sets of rules, and some of them produce very interesting patterns. For example, rule 30, shown below, results in a complex pattern over just a few iterations.
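A short Python sketch shows how a rule number turns into a pattern. It relies on the standard numbering convention for these one-dimensional automata: write the rule number in binary, and the bit in position 0-7 gives the new state of the central cell for the three-cell pattern with that binary value. The function names `step` and `run`, the row width, and the decision to treat cells beyond the edges as off are assumptions made for this example.

```python
def step(cells, rule=30):
    """Apply the rule once to a row of 0/1 cells.

    Cells beyond either end of the row are treated as 0 (off).
    """
    padded = [0] + cells + [0]
    new_cells = []
    for i in range(1, len(padded) - 1):
        left, center, right = padded[i - 1], padded[i], padded[i + 1]
        pattern = 4 * left + 2 * center + right  # a number from 0 to 7
        new_cells.append((rule >> pattern) & 1)  # look up that bit of the rule
    return new_cells


def run(width=31, generations=15, rule=30):
    """Start from a single 'on' cell in the middle and collect each row."""
    cells = [0] * width
    cells[width // 2] = 1
    rows = [cells]
    for _ in range(generations):
        cells = step(cells, rule)
        rows.append(cells)
    return rows


for row in run():
    print("".join("#" if cell else "." for cell in row))
```

Try changing the `rule` argument to other values between 0 and 255 to see how different the resulting patterns can be.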

Watch Wolfram's exploration on computing a theory of knowledge in his TED talk from 2010 (about 20 minutes).

- What applications does Wolfram see for this kind of analysis of nature?

- What are some of the advantages of the kind of data mining he demonstrates with Wolfram Alpha? What are some of the disadvantages?

- This talk was given in 2010. Have any of Wolfram's predictions about the use of data mining been realized so far?

Study/Discussion Questions:

- What kinds of problems might systems analysis be able to address?

- What limitations apply to this kind of "science"?

- How does one study a particular system?

On your own

- An example of a cellular automaton is John Conway's Game of Life, which you can play on his website. This game extends the cellular automaton rules into two dimensions.
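If you would like to experiment with the Game of Life yourself, here is a minimal sketch in Python. The rules are not given in this lecture; the sketch uses the standard ones (a dead cell with exactly three live neighbors comes alive, and a live cell with two or three live neighbors survives). The function name `life_step` and the use of a set of (row, column) pairs are choices made for this example.

```python
from collections import Counter


def life_step(live):
    """Advance the Game of Life by one generation.

    `live` is a set of (row, col) pairs for the cells that are alive.
    """
    # Count, for every cell next to a live cell, how many live neighbors it has.
    neighbor_counts = Counter(
        (r + dr, c + dc)
        for r, c in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    return {
        cell
        for cell, n in neighbor_counts.items()
        if n == 3 or (n == 2 and cell in live)
    }


# A "blinker": three cells in a row flip between horizontal and vertical.
blinker = {(1, 0), (1, 1), (1, 2)}
print(sorted(life_step(blinker)))             # [(0, 1), (1, 1), (2, 1)]
print(life_step(life_step(blinker)) == blinker)  # True
```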

© 2005 - 2025 This course is offered through Scholars Online, a non-profit organization supporting classical Christian education through online courses. Permission to copy course content (lessons and labs) for personal study is granted to students currently or formerly enrolled in the course through Scholars Online. Reproduction for any other purpose, without the express written consent of the author, is prohibited.